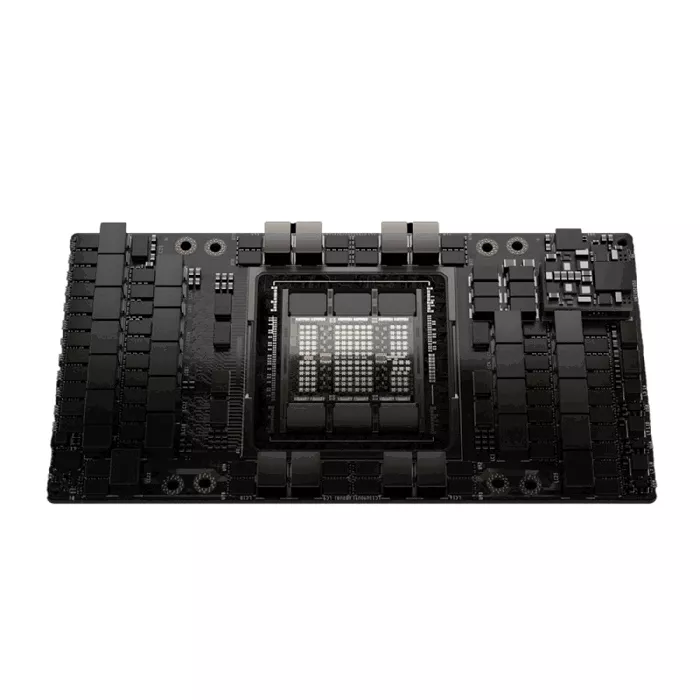

NVIDIA H100 Tensor Core GPU 80GB SXM

H100 features fourth-generation Tensor Cores and a Transformer Engine with FP8 precision, which together provide up to 4X faster training than the prior generation on GPT-3 (175B) models. The combination of fourth-generation NVLink, which offers 900 gigabytes per second (GB/s) of GPU-to-GPU interconnect bandwidth; NDR Quantum-2 InfiniBand networking, which accelerates communication between GPUs across nodes; PCIe Gen5; and NVIDIA Magnum IO™ software delivers efficient scalability from small enterprise systems to massive, unified GPU clusters.

NVIDIA H100 Tensor Core GPU Resources

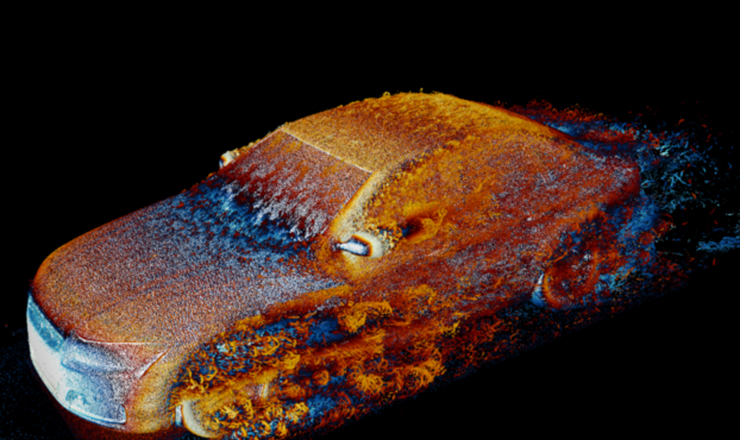

An Order-of-Magnitude Leap for Accelerated Computing

The NVIDIA H100 Tensor Core GPU delivers exceptional performance, scalability, and security for every workload. Built on the NVIDIA Hopper™ architecture, it speeds up large language models (LLMs) by up to 30X over the previous generation and includes a dedicated Transformer Engine for tackling trillion-parameter language models with breakthrough efficiency.

Transformational AI Training

Fourth-generation Tensor Cores with FP8 precision and a new Transformer Engine accelerate training of GPT-3 class models by up to 4X. Coupled with 900GB/s NVLink, PCIe Gen5, and NVIDIA Magnum IO™, the H100 scales from enterprise deployments to the largest AI supercomputers in the world.
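The FP8 claim is easier to appreciate with a concrete picture of what 8-bit floating point does to values. Below is a minimal pure-Python sketch, assuming the E4M3 FP8 variant (4 exponent bits, 3 mantissa bits, largest finite value 448) and a single per-tensor scale that maps the tensor's largest magnitude onto that maximum. It is not NVIDIA's Transformer Engine API, which manages scaling factors and the FP8/16-bit choice on its own; the function names are hypothetical, and saturating out-of-range values at ±448 is a simplifying assumption.

```python
import math

# Assumed E4M3 parameters (4 exponent bits, 3 mantissa bits, bias 7).
E4M3_MAX = 448.0      # largest finite E4M3 magnitude
E4M3_MIN_EXP = -6     # exponent of the smallest normal value (2**-6)
MANTISSA_BITS = 3


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest E4M3 value (half-to-even, saturating at +/-448)."""
    if x == 0.0 or math.isnan(x):
        return x
    if math.isinf(x):
        return math.copysign(E4M3_MAX, x)
    mag = abs(x)
    # frexp gives mag = m * 2**e with 0.5 <= m < 1, so the binade exponent is e - 1.
    # Clamping at E4M3_MIN_EXP puts tiny values on the subnormal grid.
    exp = max(math.frexp(mag)[1] - 1, E4M3_MIN_EXP)
    step = 2.0 ** (exp - MANTISSA_BITS)   # spacing between representable values
    rounded = round(mag / step) * step    # built-in round() is half-to-even
    return math.copysign(min(rounded, E4M3_MAX), x)


def fp8_roundtrip(values: list[float]) -> list[float]:
    """Quantize with a single per-tensor scale, then dequantize back to float."""
    if not values:
        return []
    amax = max(abs(v) for v in values)
    # Map the largest magnitude onto the top of the E4M3 range.
    scale = E4M3_MAX / amax if amax > 0 else 1.0
    return [round_to_e4m3(v * scale) / scale for v in values]


print(round_to_e4m3(0.1))  # 0.1015625
sample = [0.001, 0.5, -3.0]
for original, restored in zip(sample, fp8_roundtrip(sample)):
    print(f"{original:+.4f} -> {restored:+.6f}")
```

With only three mantissa bits, each value in the normal range can be off by up to roughly 6% after rounding; the per-tensor scale is what keeps both small and large tensors inside FP8's narrow dynamic range.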

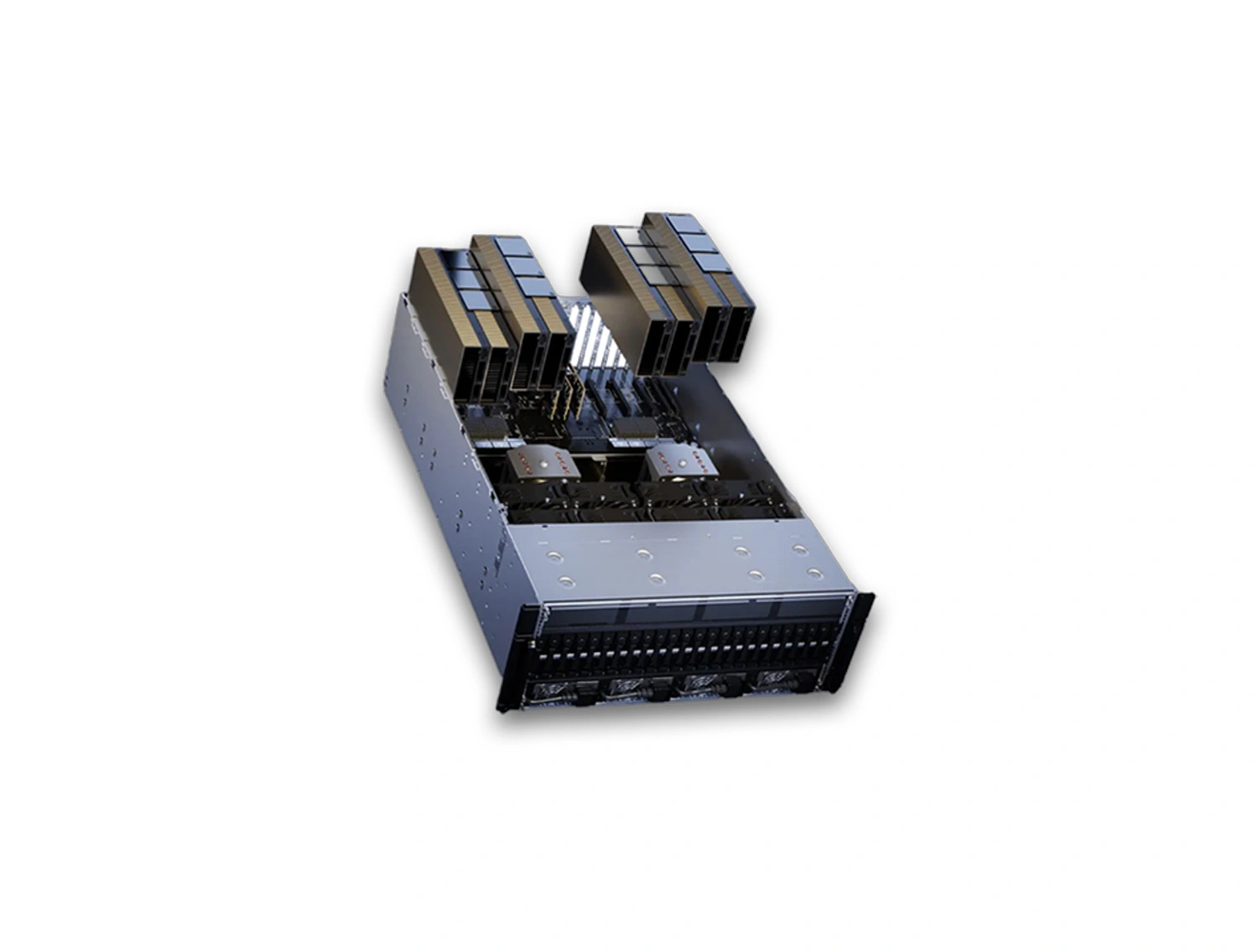

Transform Your Data Center

Accelerate a wide range of demanding workloads with optimized AI inference, high-performance computing, and efficient infrastructure utilization.

- Real-Time Deep Learning Inference: up to 30X faster inference, with Tensor Cores that accelerate all precisions, including FP64, TF32, FP32, FP16, INT8, and FP8.
- Exascale HPC: 60 TFLOPS of FP64 and up to 1 PFLOP of TF32 throughput, plus up to 7X faster dynamic programming (for example, DNA sequence alignment) through DPX instructions.
- Accelerated Analytics: 3.35TB/s of memory bandwidth, NVSwitch™, and RAPIDS™ for scale-out data pipelines.
- Enterprise-Ready Utilization: Multi-Instance GPU (MIG) partitions a single H100 into up to seven isolated instances for flexible multi-user provisioning and higher GPU utilization.
- Built-In Confidential Computing: a hardware-based trusted execution environment (TEE) at the silicon level for securing AI workloads at scale.
- Grace Hopper Superchip: pairs the NVIDIA Grace CPU with a Hopper GPU over a 900GB/s chip-to-chip link, delivering up to 10X higher performance for large-model AI and HPC.
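To put the memory-bandwidth figure in perspective, here is a back-of-the-envelope calculation of the minimum time needed to read the GPU's entire 80GB of memory once. It assumes the SXM part's datasheet peak of 3.35TB/s (decimal units) and ignores real-world efficiency, caching, and overlap with compute, so the result is a theoretical lower bound rather than a measurement; the function name is hypothetical.

```python
def min_sweep_time_ms(num_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on the time (ms) to read num_bytes once at a given peak bandwidth."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return num_bytes / bandwidth_bytes_per_s * 1e3


HBM_CAPACITY_BYTES = 80e9   # 80 GB of GPU memory, decimal gigabytes
PEAK_BANDWIDTH = 3.35e12    # assumed datasheet peak, bytes per second

sweep_ms = min_sweep_time_ms(HBM_CAPACITY_BYTES, PEAK_BANDWIDTH)
sweeps_per_s = PEAK_BANDWIDTH / HBM_CAPACITY_BYTES
print(f"full-memory sweep: {sweep_ms:.1f} ms ({sweeps_per_s:.1f} sweeps/s)")
# prints: full-memory sweep: 23.9 ms (41.9 sweeps/s)
```

Memory-bound analytics jobs that scan their whole working set cannot beat this bound, which is why bandwidth, not just FLOPS, matters for data pipelines.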

| Condition | Brand New |
|---|---|
| MFG Number | 900-21002-0000-000 |
| Price | $31,250.00 |
| Financing | No |
| Product Card Description | 80GB GPU memory, 989 TFLOPS TF32 (with sparsity), 3.35TB/s memory bandwidth, configurable TDP up to 700W for maximum performance |
| Order Processing Guidelines | Inquiry first: please contact our team to discuss your requirements before placing an order. |
| Special Price From Date | Jul 31, 2024 |
| Special Price To Date | Aug 19, 2024 |