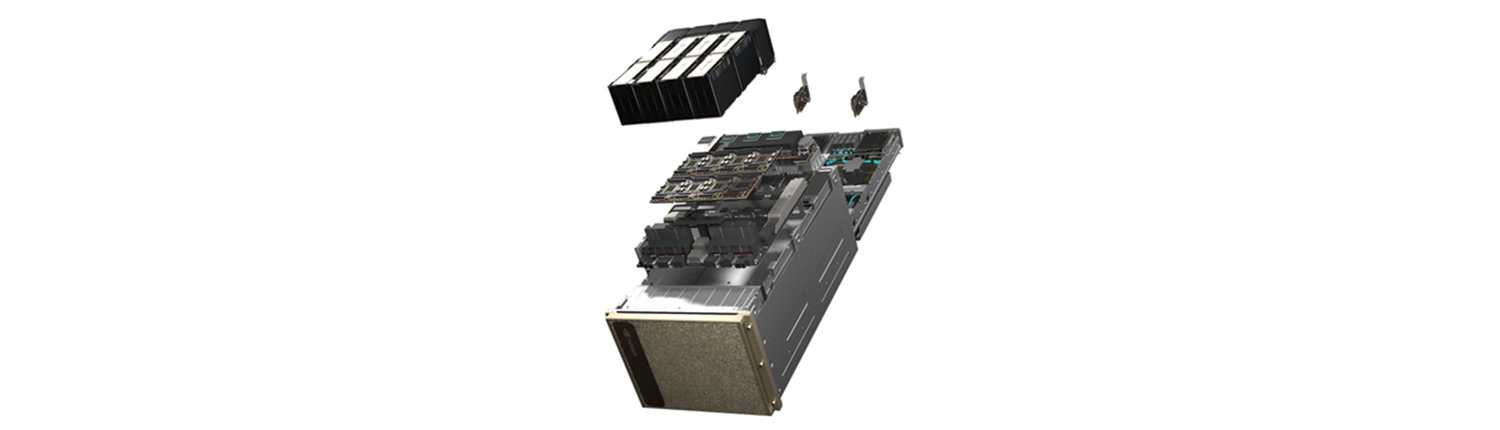

NVIDIA DGX H100 AI Server

The NVIDIA DGX H100 AI Server delivers breakthrough performance for AI, deep learning, and large-scale machine learning workloads. Featuring NVIDIA H100 Tensor Core GPUs, it provides enterprises with the fastest path to scalable AI innovation and research.

Enterprise Infrastructure to Power AI Factories

NVIDIA DGX SuperPOD™ provides leadership-class AI infrastructure with agile, scalable performance for the most challenging AI training and inference workloads. Available with a choice of NVIDIA Blackwell-powered compute options in the NVIDIA DGX™ platform, DGX SuperPOD isn’t just a collection of hardware, but a full-stack data center platform that includes industry-leading computing, storage, networking, software, and infrastructure management optimized to work together and provide maximum performance at scale.

The Proven Standard for Enterprise AI

Built from the ground up for enterprise AI, the NVIDIA DGX™ platform, featuring NVIDIA DGX SuperPOD™, combines the best of NVIDIA software, infrastructure, and expertise in a modern, unified AI development solution—powering next-generation AI factories with unparalleled performance, scalability, and innovation.

The Most Complete AI Platform

With NVIDIA DGX SuperPOD™, enterprises gain access to a holistic AI ecosystem, including NVIDIA AI software solutions, built to accelerate AI innovation at scale. Build your AI Center of Excellence on NVIDIA DGX H100 AI servers, fully integrated with NVIDIA Base Command™ and AI Enterprise software.

Leadership-Class Infrastructure

Experience the power of the NVIDIA DGX H100 AI server in a multitude of ways that fit your business: on premises, co-located, rented from managed service providers, and more. And with DGX-Ready Lifecycle Management, organizations get a predictable financial model to keep their deployment leading-edge.

| MFG Number | DGXH-G640F+P2CMI36 |
|---|---|
| Condition | Brand New |
| Price | $350,000.00 |
| Show Product Will Be Available Soon on FE | No |
| Product Card Description | Enterprise-grade AI server powered by NVIDIA H100 GPUs for unmatched performance in deep learning and machine learning. |
| Order Processing Guidelines | Inquiry First: Please reach out to our team to discuss your requirements before placing an order. |